NeighborEmbedding

- class torchdr.NeighborEmbedding(affinity_in: Affinity, affinity_out: Affinity | None = None, kwargs_affinity_out: Dict | None = None, n_components: int = 2, lr: float | str = 1.0, optimizer: str | Type[Optimizer] = 'SGD', optimizer_kwargs: Dict | str = 'auto', scheduler: str | Type[LRScheduler] | None = None, scheduler_kwargs: Dict | str | None = 'auto', min_grad_norm: float = 1e-07, max_iter: int = 2000, init: str | Tensor | ndarray = 'pca', init_scaling: float = 0.0001, device: str = 'auto', backend: str | FaissConfig | None = None, verbose: bool = False, random_state: float | None = None, early_exaggeration_coeff: float | None = None, early_exaggeration_iter: int | None = None, repulsion_strength: float = 1.0, check_interval: int = 50, compile: bool = False, distributed: bool | str = 'auto', **kwargs: Any)

Bases: AffinityMatcher

Base class for neighbor embedding methods.

All neighbor embedding methods solve an optimization problem of the form:

\[\min_{\mathbf{Z}} \: - \lambda \sum_{ij} P_{ij} \log Q_{ij} + \rho \cdot \mathcal{L}_{\mathrm{rep}}(\mathbf{Q})\]

where \(\mathbf{P}\) is the input affinity matrix, \(\mathbf{Q}\) is the output affinity matrix, \(\lambda\) is the early exaggeration coefficient, \(\rho\) is repulsion_strength, and \(\mathcal{L}_{\mathrm{rep}}\) is a repulsive term that prevents collapse.

This class extends AffinityMatcher with functionality specific to neighbor embedding:

- Loss decomposition: By default, the loss is decomposed into an attractive term and a repulsive term via _compute_attractive_loss() and _compute_repulsive_loss(). When _use_closed_form_gradients is True, subclasses implement _compute_attractive_gradients() and _compute_repulsive_gradients() instead. Subclasses that need a different loss structure can override _compute_loss() directly.
- Early exaggeration: The attraction term is scaled by early_exaggeration_coeff (\(\lambda\)) for the first early_exaggeration_iter iterations to encourage cluster formation.
- Auto learning rate: When lr='auto', the learning rate is set adaptively based on the number of samples.
- Auto optimizer tuning: When optimizer_kwargs='auto' with SGD, momentum is adjusted between the early exaggeration and normal phases.
- Distributed multi-GPU training: When launched with torchrun, this class partitions the input affinity across GPUs, broadcasts the embedding, and synchronizes gradients via all-reduce. Set distributed='auto' (default) to auto-detect.
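To make the loss decomposition concrete, the following dependency-free sketch evaluates the objective for a tiny embedding. It assumes a Student-t output kernel \(w_{ij} = 1 / (1 + \|z_i - z_j\|^2)\), an attractive term \(-\sum_{ij} P_{ij} \log w_{ij}\), and a repulsive term \(\log \sum_{i \neq j} w_{ij}\), which is the t-SNE-style split. These choices and all function names are illustrative assumptions; they do not mirror the internals of _compute_attractive_loss() or _compute_repulsive_loss().

```python
import math


def student_kernel(Z):
    """Unnormalized Student-t similarities w_ij = 1 / (1 + ||z_i - z_j||^2)."""
    n = len(Z)
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:  # the diagonal stays at 0
                sq_dist = sum((a - b) ** 2 for a, b in zip(Z[i], Z[j]))
                W[i][j] = 1.0 / (1.0 + sq_dist)
    return W


def attractive_loss(P, W):
    """-sum_ij P_ij log w_ij over off-diagonal pairs with P_ij > 0."""
    n = len(P)
    return -sum(
        P[i][j] * math.log(W[i][j])
        for i in range(n)
        for j in range(n)
        if i != j and P[i][j] > 0.0
    )


def repulsive_loss(W):
    """Log of the partition function sum_{i != j} w_ij (assumed L_rep)."""
    return math.log(sum(sum(row) for row in W))


def neighbor_embedding_loss(P, Z, exaggeration=1.0, repulsion_strength=1.0):
    """exaggeration * attraction + repulsion_strength * repulsion."""
    W = student_kernel(Z)
    return exaggeration * attractive_loss(P, W) + repulsion_strength * repulsive_loss(W)


# Toy problem: 3 points in 2D and a symmetric P whose entries sum to 1.
P = [[0.0, 0.3, 0.2], [0.3, 0.0, 0.0], [0.2, 0.0, 0.0]]
Z = [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]]
loss_exaggerated = neighbor_embedding_loss(P, Z, exaggeration=12.0)
loss_plain = neighbor_embedding_loss(P, Z)
```

Under these assumptions, with exaggeration and repulsion_strength both equal to 1 and \(\mathbf{P}\) summing to one, the value reduces to the cross-entropy \(-\sum_{ij} P_{ij} \log Q_{ij}\) with \(Q_{ij} = w_{ij} / \sum_{k \neq l} w_{kl}\).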

Note

The default values for

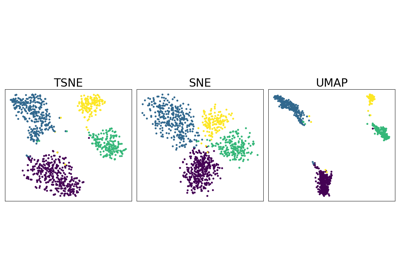

lr='auto',optimizer_kwargs='auto', and early exaggeration are based on the t-SNE paper [Van der Maaten and Hinton, 2008] and its scikit-learn implementation. These defaults work well for t-SNE but may need tuning for other methods.Direct subclasses:

TSNE,SNE,COSNE(compute the repulsive term exactly),TSNEkhorn(overrides the full loss),NegativeSamplingNeighborEmbedding(approximates the repulsive term via sampling).- Parameters:

affinity_in (Affinity) – The affinity object for the input space.

affinity_out (Affinity, optional) – The affinity object for the output embedding space. Default is None.

kwargs_affinity_out (dict, optional) – Additional keyword arguments for the affinity_out method.

n_components (int, optional) – Number of dimensions for the embedding. Default is 2.

lr (float or 'auto', optional) – Learning rate for the optimizer. If 'auto', it is set adaptively based on the number of samples. Default is 1.0.

optimizer (str or torch.optim.Optimizer, optional) – Name of an optimizer from torch.optim or an optimizer class. Default is “SGD”. For best results, we recommend using “SGD” with ‘auto’ learning rate.

optimizer_kwargs (dict or 'auto', optional) – Additional keyword arguments for the optimizer. Default is ‘auto’, which sets appropriate momentum values for SGD based on early exaggeration phase.

scheduler (str or torch.optim.lr_scheduler.LRScheduler, optional) – Name of a scheduler from torch.optim.lr_scheduler or a scheduler class. Default is None.

scheduler_kwargs (dict, 'auto', or None, optional) – Additional keyword arguments for the scheduler. Default is ‘auto’, which corresponds to a linear decay from the learning rate to 0 for LinearLR.

min_grad_norm (float, optional) – Tolerance for stopping criterion. Default is 1e-7.

max_iter (int, optional) – Maximum number of iterations. Default is 2000.

init (str or torch.Tensor or np.ndarray, optional) – Initialization method for the embedding. Default is “pca”.

init_scaling (float, optional) – Scaling factor for the initial embedding. Default is 1e-4.

device (str, optional) – Device to use for computations. Default is “auto”.

backend ({"keops", "faiss", None} or FaissConfig, optional) – Which backend to use for handling sparsity and memory efficiency. Default is None. Can be:

- “keops”: Use KeOps for memory-efficient symbolic computations
- “faiss”: Use FAISS for fast k-NN computations with default settings
- None: Use standard PyTorch operations
- FaissConfig object: Use FAISS with custom configuration

verbose (bool, optional) – Verbosity of the optimization process. Default is False.

random_state (float, optional) – Random seed for reproducibility. Default is None.

early_exaggeration_coeff (float, optional) – Coefficient for the attraction term during the early exaggeration phase. Default is None (no early exaggeration).

early_exaggeration_iter (int, optional) – Number of iterations for early exaggeration. Default is None.

repulsion_strength (float, optional) – Strength of the repulsive term. Default is 1.0.

check_interval (int, optional) – Number of iterations between two checks for convergence. Default is 50.

compile (bool, optional) – Whether to use torch.compile for faster computation. Default is False.

distributed (bool or 'auto', optional) – Whether to use distributed computation across multiple GPUs. Default is “auto”.

- “auto”: Automatically detect if running with torchrun
- True: Force distributed mode (requires torchrun)
- False: Disable distributed mode
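The interplay between early_exaggeration_coeff, early_exaggeration_iter, lr='auto', and optimizer_kwargs='auto' can be pictured as a per-iteration schedule. The sketch below borrows the heuristics of scikit-learn's t-SNE (learning rate max(N / exaggeration / 4, 50), momentum 0.5 during early exaggeration and 0.8 afterwards) purely for illustration. The exact 'auto' rules and phase boundary used by this class may differ, and every function name here is hypothetical.

```python
def exaggeration_at(iteration, coeff=None, n_exaggeration_iter=None):
    """Multiplier on the attractive term at a given 0-based iteration."""
    if coeff is None or n_exaggeration_iter is None:
        return 1.0  # early exaggeration disabled
    return coeff if iteration < n_exaggeration_iter else 1.0


def auto_learning_rate(n_samples, coeff=12.0):
    """scikit-learn style heuristic (assumed): max(N / exaggeration / 4, 50)."""
    return max(n_samples / coeff / 4.0, 50.0)


def auto_momentum(iteration, n_exaggeration_iter=250):
    """scikit-learn style heuristic (assumed): 0.5 while exaggerating, 0.8 after."""
    return 0.5 if iteration < n_exaggeration_iter else 0.8


# Example: 60,000 samples, exaggeration of 12 for the first 250 iterations.
lr = auto_learning_rate(60_000, coeff=12.0)
schedule = [
    (it, exaggeration_at(it, 12.0, 250), auto_momentum(it, 250))
    for it in (0, 249, 250, 1999)
]
```

In this sketch the attraction multiplier and the momentum switch at the same iteration, which is what "adjusted between the early exaggeration and normal phases" suggests; leaving either exaggeration argument as None keeps the multiplier at 1 throughout.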

- on_affinity_computation_end()

Set up chunk_indices_ for the local GPU’s portion of the data.

In distributed mode, the affinity provides chunk bounds (chunk_start_, chunk_size_) so each GPU processes a different slice of rows. In single-GPU mode, the chunk covers all samples.
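The row partitioning described above can be illustrated with a small helper. This sketch assumes a contiguous, near-even split of the rows across processes; the functions chunk_bounds and chunk_indices are hypothetical, and in the library the actual chunk_start_ and chunk_size_ values are supplied by the affinity object.

```python
def chunk_bounds(n_samples, world_size, rank):
    """Return (start, size) of the contiguous row slice owned by `rank`."""
    base, remainder = divmod(n_samples, world_size)
    # The first `remainder` ranks take one extra row each.
    size = base + (1 if rank < remainder else 0)
    start = rank * base + min(rank, remainder)
    return start, size


def chunk_indices(n_samples, world_size=1, rank=0):
    """Row indices handled locally; with a single process this is every row."""
    start, size = chunk_bounds(n_samples, world_size, rank)
    return list(range(start, start + size))


# 10 samples over 4 processes: slice sizes 3, 3, 2, 2 with no gaps or overlaps.
parts = [chunk_indices(10, world_size=4, rank=r) for r in range(4)]
single = chunk_indices(5)
```

Each process then only needs to evaluate the loss terms for its own rows, which is why gradients have to be combined across processes afterwards.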

Examples using NeighborEmbedding:

Neighbor Embedding on genomics & equivalent affinity matcher formulation